FACT SHEET: Vice President Harris Announces OMB Policy to Advance Governance, Innovation, and Risk Management in Federal Agencies’ Use of Artificial Intelligence

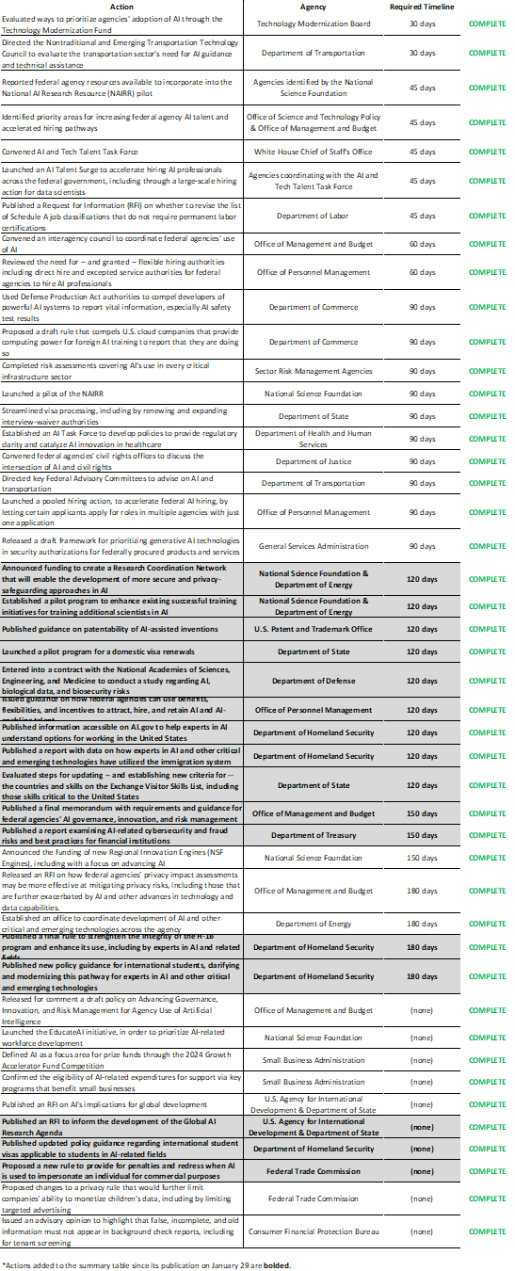

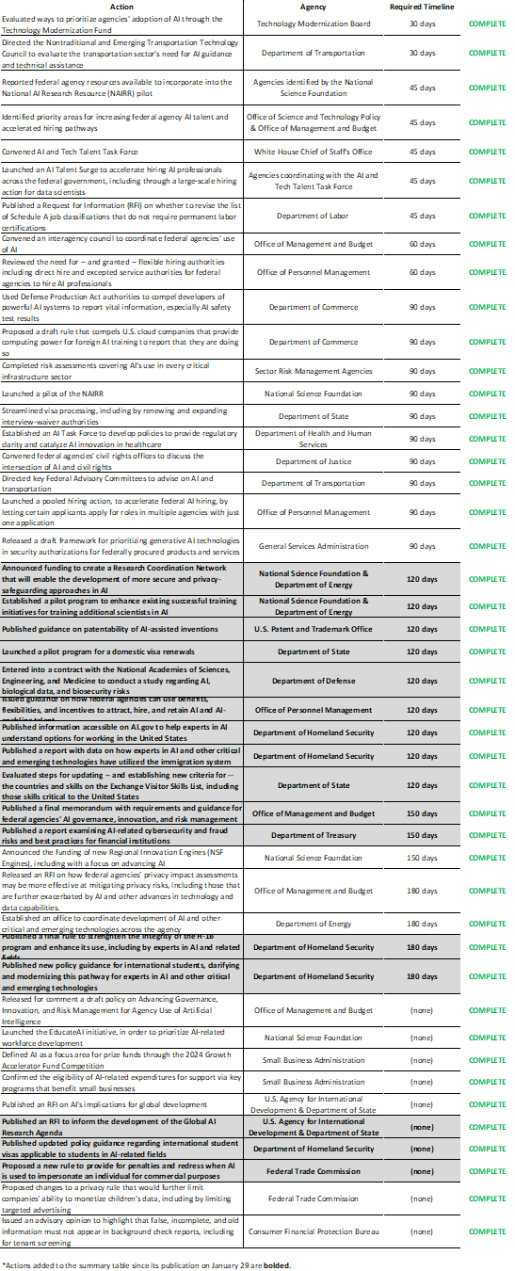

Administration announces completion of 150-day actions tasked by President Biden’s landmark Executive Order on AI

Today, Vice President Kamala Harris announced that the White House Office of Management and Budget (OMB) is issuing OMB's first government-wide policy to mitigate risks of artificial intelligence (AI) and harness its benefits, delivering on a core component of President Biden's landmark AI Executive Order. The Order directed sweeping action to strengthen AI safety and security, protect Americans' privacy, advance equity and civil rights, stand up for consumers and workers, promote innovation and competition, advance American leadership around the world, and more. Federal agencies have reported that they have completed all of the 150-day actions tasked by the E.O., building on their previous success of completing all 90-day actions.

This multi-faceted direction to Federal departments and agencies builds upon the Biden-Harris Administration’s record of ensuring that America leads the way in responsible AI innovation. In recent weeks, OMB announced that the President’s Budget invests in agencies’ ability to responsibly develop, test, procure, and integrate transformative AI applications across the Federal Government.

In line with the President’s Executive Order, OMB’s new policy directs the following actions:

Address Risks from the Use of AI

This guidance places people and communities at the center of the government’s innovation goals. Federal agencies have a distinct responsibility to identify and manage AI risks because of the role they play in our society, and the public must have confidence that the agencies will protect their rights and safety.

By December 1, 2024, Federal agencies will be required to implement concrete safeguards when using AI in a way that could impact Americans’ rights or safety. These safeguards include a range of mandatory actions to reliably assess, test, and monitor AI’s impacts on the public, mitigate the risks of algorithmic discrimination, and provide the public with transparency into how the government uses AI. These safeguards apply to a wide range of AI applications from health and education to employment and housing.

For example, by adopting these safeguards, agencies can ensure that:

- When at the airport, travelers will continue to have the ability to opt out from the use of TSA facial recognition without any delay or losing their place in line.

- When AI is used in the Federal healthcare system to support critical diagnostic decisions, a human being oversees the process to verify the tools' results and avoid disparities in healthcare access.

- When AI is used to detect fraud in government services, there is human oversight of impactful decisions, and affected individuals have the opportunity to seek remedy for AI harms.

If an agency cannot apply these safeguards, the agency must cease using the AI system, unless agency leadership justifies why doing so would increase risks to safety or rights overall or would create an unacceptable impediment to critical agency operations.

To protect the federal workforce as the government adopts AI, OMB’s policy encourages agencies to consult federal employee unions and adopt the Department of Labor’s forthcoming principles on mitigating AI’s potential harms to employees. The Department is also leading by example, consulting with federal employees and labor unions both in the development of those principles and its own governance and use of AI.

The guidance also advises Federal agencies on managing risks specific to their procurement of AI. Federal procurement of AI presents unique challenges, and a strong AI marketplace requires safeguards for fair competition, data protection, and transparency. Later this year, OMB will take action to ensure that agencies' AI contracts align with OMB policy and protect the rights and safety of the public from AI-related risks. The request for information (RFI) issued today will collect input from the public on ways to ensure that private sector companies supporting the Federal Government follow the best available practices and requirements.

Expand Transparency of AI Use

The policy released today requires Federal agencies to improve public transparency in their use of AI. Specifically, agencies must publicly:

- Release expanded annual inventories of their AI use cases, including identifying use cases that impact rights or safety and how the agency is addressing the relevant risks.

- Report metrics about the agency’s AI use cases that are withheld from the public inventory because of their sensitivity.

- Notify the public of any AI exempted by a waiver from complying with any element of the OMB policy, along with the justification for each waiver.

- Release government-owned AI code, models, and data, where such releases do not pose a risk to the public or government operations.

Today, OMB is also releasing draft instructions to agencies that detail the contents of this public reporting.

Advance Responsible AI Innovation

OMB’s policy will also remove unnecessary barriers to Federal agencies’ responsible AI innovation. AI technology presents tremendous opportunities to help agencies address society’s most pressing challenges. Examples include:

- Addressing the climate crisis and responding to natural disasters. The Federal Emergency Management Agency is using AI to quickly review and assess structural damage in the aftermath of hurricanes, and the National Oceanic and Atmospheric Administration is developing AI to conduct more accurate forecasting of extreme weather, flooding, and wildfires.

- Advancing public health. The Centers for Disease Control and Prevention is using AI to predict the spread of disease and detect the illicit use of opioids, and the Centers for Medicare & Medicaid Services is using AI to reduce waste and identify anomalies in drug costs.

- Protecting public safety. The Federal Aviation Administration is using AI to help deconflict air traffic in major metropolitan areas to improve travel time, and the Federal Railroad Administration is researching AI to help predict unsafe railroad track conditions.

Advances in generative AI are expanding these opportunities, and OMB’s guidance encourages agencies to responsibly experiment with generative AI, with adequate safeguards in place. Many agencies have already started this work, including through using AI chatbots to improve customer experiences and other AI pilots.

Grow the AI Workforce

Building and deploying AI responsibly to serve the public starts with people. OMB's guidance directs agencies to expand and upskill their AI talent. Agencies are aggressively strengthening their workforces to advance AI risk management, innovation, and governance, including:

- The Biden-Harris Administration has committed to hiring 100 AI professionals by Summer 2024 to promote the trustworthy and safe use of AI as part of the National AI Talent Surge created by Executive Order 14110, and will run a career fair for AI roles across the Federal Government on April 18.

- To facilitate these efforts, the Office of Personnel Management has issued guidance on pay and leave flexibilities for AI roles, to improve retention and emphasize the importance of AI talent across the Federal Government.

- The Fiscal Year 2025 President's Budget includes an additional $5 million to expand the General Services Administration's government-wide AI training program, which last year had over 4,800 participants from across 78 Federal agencies.

Strengthen AI Governance

To ensure accountability, leadership, and oversight for the use of AI in the Federal Government, the OMB policy requires federal agencies to:

- Designate Chief AI Officers, who will coordinate the use of AI across their agencies. Since December, OMB and the Office of Science and Technology Policy have regularly convened these officials in a new Chief AI Officer Council to coordinate their efforts across the Federal Government and to prepare for implementation of OMB’s guidance.

- Establish AI Governance Boards, chaired by the Deputy Secretary or equivalent, to coordinate and govern the use of AI across the agency. As of today, the Departments of Defense, Veterans Affairs, Housing and Urban Development, and State have established these governance bodies, and every CFO Act agency is required to do so by May 27, 2024.

In addition to this guidance, the Administration is announcing several other measures to promote the responsible use of AI in Government:

- OMB will issue a request for information (RFI) on Responsible Procurement of AI in Government, to inform future OMB action to govern AI use under Federal contracts;

- Agencies will expand their 2024 Federal AI Use Case Inventory reporting to broaden public transparency into how the Federal Government is using AI;

- The Administration has committed to hire 100 AI professionals by Summer 2024 as part of the National AI Talent Surge to promote the trustworthy and safe use of AI.

With these actions, the Administration is demonstrating that the Federal Government is leading by example as a global model for the safe, secure, and trustworthy use of AI. The policy announced today builds on the Administration's Blueprint for an AI Bill of Rights and the National Institute of Standards and Technology (NIST) AI Risk Management Framework, and will drive Federal accountability and oversight of AI, increase transparency for the public, advance responsible AI innovation for the public good, and create a clear baseline for managing risks.

It also marks a major milestone 150 days after the release of Executive Order 14110. The table below presents an updated summary of many of the activities federal agencies have completed in response to the Executive Order.

###